The increased prevalence of disinformation, especially on online platforms, has created a vexing problem that causes various harms. Recent developments in artificial intelligence (AI) will likely worsen the situation. In order to solve or even mitigate the problem, we must first understand its complexity. This calls for a multidisciplinary approach. In this article, I first describe the problem and the difficulties in solving it, and then consider what kind of research and development is needed for solutions.

Author: Harri Heikkilä

The idealistic vision of the Internet as an information highway, as Bill Gates envisioned it at the start of the 1990s, has been seriously undermined by the current level of false news, hate speech and conspiracy theories on the web. Research has shown that disinformation spreads farther and faster on the Internet than truthful information (Vosoughi et al. 2018), and algorithms tend to favour heated discourse. No relief from this tendency is in sight; on the contrary, the situation may deteriorate further. Why? First, the new and ubiquitous language models can believably imitate any language (Robins 2023), thus freeing troll farms from the effort of localising messages; typos or broken English (or Finnish) will no longer reveal the remote malevolent influencer. Building language models is already cheap and will get cheaper in the future (Beauchamp-Mustafaga & Marcellino 2023).

Secondly, with recent technological developments, it is even easier to create fake accounts and AI-generated avatars and to start targeted mass posting, or even mass calling (Rugge 2020). Furthermore, generative adversarial networks are becoming so sophisticated that algorithms will soon produce bot content that is indistinguishable from content produced by humans (Goswami 2022).

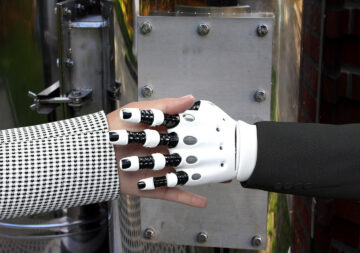

Finally, creating deceptive images does not require photo editing skills in the age of generative AI; the desired composition can be created with relatively little effort by prompting, and the result can be effective (see picture 1). The quality of generated images and video is increasing rapidly, making detection more difficult.

Thus, thanks to generative AI, manipulation will likely be more rapid, more scalable, more affordable, and probably more believable and more significant (Beauchamp-Mustafaga & Marcellino 2023).

Picture 1: During the immigration crisis of November 2023 on the eastern border of Finland, Finnish politician Reijo Tossavainen (@ReijoTossavaine) published an AI-created picture on the X service (see Lippu 2023; Järveläinen 2023).

Fighting AI with AI

Montoro-Montarroso et al. (2023) and Santos (2023) have looked at the intriguing possibility of using AI and machine learning (ML) to solve the undesirable issues created by AI and ML. The idea is to turn advanced language and sentiment analysis algorithms against the problem by exposing disinformation through, for example, sorting and labelling. Although I find this approach exciting, disinformation is far from binary and thus quite hard to classify reliably. Even if this were possible with the human-in-the-loop approach described by Santos (2023), it would be hard to implement in social media.

One has to bear in mind that media is platform-based: filtering and labelling information have to be done within the platform, and as the recent developments on the X platform show, platforms are not always interested in this because it conflicts with the goal of gaining the maximum audience. Filtering is always a trade-off: if social media companies are too expansive in what they classify as disinformation, they risk silencing users who post accurate information. If companies are too narrow in their classifications, disinformation attacks can go undetected (Villasenor 2020, 108–109). Regulating AI is another question: it is unlikely ever to be universally regulated (Beauchamp-Mustafaga & Marcellino 2023).
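The filtering trade-off can be illustrated with a toy sketch. It assumes a hypothetical classifier that gives each post a disinformation score between 0 and 1; the scores, labels and thresholds below are invented for illustration and do not come from any real platform or from the cited sources.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    score: float       # hypothetical classifier score in [0, 1]
    is_disinfo: bool   # ground-truth label, invented for this example


# Invented sample data: eight posts with overlapping scores.
POSTS = [
    Post(0.95, True), Post(0.80, True), Post(0.65, False), Post(0.55, True),
    Post(0.40, False), Post(0.35, True), Post(0.20, False), Post(0.10, False),
]


def filter_outcome(posts, threshold):
    """Return (accurate posts wrongly flagged, disinformation posts missed)."""
    silenced = sum(1 for p in posts if p.score >= threshold and not p.is_disinfo)
    missed = sum(1 for p in posts if p.score < threshold and p.is_disinfo)
    return silenced, missed


# A low threshold is "too expansive", a high threshold is "too narrow".
for threshold in (0.3, 0.5, 0.7):
    silenced, missed = filter_outcome(POSTS, threshold)
    print(f"threshold={threshold}: silenced accurate={silenced}, "
          f"missed disinformation={missed}")
```

With these made-up numbers, lowering the threshold silences more accurate posts, while raising it lets more disinformation through; no threshold removes both kinds of error at once.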

To make matters worse, studies have found that the share of online users who visit false news sites directly is quite limited, whereas disinformation draws disproportionate attention on social networks (Graves 2018). Thus, at best, the sorting-and-labelling approach would leave the majority of disinformation unaffected.

It would be beneficial to admit that disinformation is not merely an algorithmic issue but, to the greatest extent, a psychological or social-psychological and political matter that has its roots in media change and societal transformations. The starting point should be that disinformation is inherently complex and cannot be solved effectively only by automatically sorting, labelling and exposing it. It is a human problem which requires a human solution, and that is not easy either: humans are even more complicated than algorithms, and there are a variety of psychological and cultural issues to consider.

Psychological and cultural developments involved

- Disinformation is fact-resistant

Debunking or correcting misinformation seems straightforward and logical at first glance, but various studies have found the approach ineffective (Miller 2023). Repeating false statements, even in the context of fact checks, can reinforce rather than undermine belief in the false information (Hasen 2022, 163). Disinformation is fact-resistant because, paradoxically, it is often not about the facts but about identity. In fact, Diaz Ruiz and Nilsson (2023) claim that identity-driven controversies are nowadays prime vehicles for circulating disinformation: “Authoritative corrections that set the record straight are insufficient and can backfire because identity and cultural positions cannot be disproved; they are impervious to fact-checking.” The problem remains that identity is undoubtedly reinforced in echo chambers and can lead in radical directions (ibid. 32–33).

- Role-playing and libertarian ethos are inherent parts of the internet

Turkle (1997) argued as early as the 1990s that the web is a theatre where you play roles. Your web self, your avatar, can be a different person who interacts with others who are likewise not the persons they are in real life. Da Empoli (2019) has gone further, arguing that social media is not only a theatre with roles but a classic carnival, where people compete to see who can more lubriciously attack “the establishment”. In a way, the political debate is a permanent day of the false king. Everyone is invited to participate in trampling norms under the protection of masks. The equivalent of carnival masks is now shooting from the cover of avatars (Da Empoli 2019). Masking of identity is built into the structure of internet communication (Hasen 2022, 82). With this anonymous role-playing, it is also easier to distance oneself from the consequences of aggression. It is evident that fact-checking and regulation are problematic in such an anarchistic or libertarian environment. Regulation is often seen as a solution, but also as a problem from the standpoint of free speech (Diaz Ruiz & Nilsson 2023, 32).

- The irreversible media change increases the responsibility of the reader

Abrahamson (2019) argues that the root cause of the dissemination of false news is that the Internet destroyed the newspaper business model and, with it, quality reporting: metrics of popularity have replaced quality in newspapers. This means that responsibility for correct information no longer lies with reliable and reputable media but increasingly with consumers themselves. The decision of what to share on social media is crucial.

- Reading habits have changed

McLuhan’s (1994) fundamental point was that media is an extension of man; when the media changes, we change. The problem with fact-checking by the general public is that facts require concentration, which has been eroded by the very same media development that has increased the need for it. The load on our minds has multiplied while, at the same time, the reading style fostered by new media, social media and apps has weakened our ability to concentrate. Huhtinen (2023) points out that the consumers who are particularly vulnerable to information pollution are those who lack language skills, have weak knowledge of the cultural layers of society, and are generally outsiders. In sum, those who need quality information the most are left without it. People are now, and will be in the future, more viewers than readers. They tend to skim texts and move to reading only when necessary (Krug 2006). On average, users read only about 20 per cent of the text on a web page (Nielsen 2008). Information should therefore be designed to work better with the new “scanning and glancing” reading style.

Designing a human counterstrategy

Since we cannot change how social media works or affect the metrics of news media, since debunking is ineffective at least in echo chambers, and since we are neither able to impose regulation worldwide nor willing to flirt with censorship nationally, the solutions that remain are primarily those focused on pre-vaccination. Creating awareness of disinformation and of how to detect contaminated data should be targeted at non-partisan audiences, bearing the human mind in mind. People should be given methods to do fact-checking themselves instead of relying on authority-based corrections or controls. In short, the change has to involve people themselves, and researchers and developers have to provide tools to facilitate it.

We need a research project that focuses on finding new, creative ways to heighten a critical attitude towards information among audiences, and especially among students, for example by making it easier – perhaps even fun – to recognise the patterns of false news. Here AI could help. There is no uniform solution, but I have listed in Table 1 the problems found and some examples of rudimentary solutions that need to be researched further. Epistemology could be advanced by creating an interactive, gamified tool that guides users to apply classic models of argumentation, such as the Toulmin model (University of Toronto), with the results visualised as interactive flow diagrams. AI could also generate questions about selected content. Reading, especially of long texts, should be promoted, and understanding could be facilitated by creating layouts that make the text more readable and legible, following the Gestalt laws and accessibility standards.

| PROBLEM | DEVELOPED AI-SUPPORTED SOLUTION |
| --- | --- |
| Epistemology | Students: argumentation using, for example, Toulmin models and infographics |
| Reliability | Readers: questions generated from content, including images and video, for example via a browser plug-in |
| Reading habits | Publishers: improving content via Gestalt heuristics and accessibility standards; creating interfaces that facilitate long reading |

Table 1. AI-supported solutions
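As a rough illustration of the first row, the sketch below shows one way a tool might represent a Toulmin-style argument and guide a student towards the parts still missing. The six part names follow the Toulmin model; the class, the function names and the guiding questions are hypothetical, and in an actual tool the questions might be generated by AI rather than fixed in advance.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class ToulminArgument:
    """The six parts of a Toulmin-style argument; None means not yet filled in."""
    claim: Optional[str] = None      # the statement being argued for
    grounds: Optional[str] = None    # evidence offered for the claim
    warrant: Optional[str] = None    # why the grounds support the claim
    backing: Optional[str] = None    # support for the warrant itself
    qualifier: Optional[str] = None  # how strongly the claim is asserted
    rebuttal: Optional[str] = None   # conditions under which the claim fails


# Hypothetical guiding questions, one per part of the model.
PROMPTS = {
    "claim": "What exactly is the text asking you to believe?",
    "grounds": "What evidence is offered for the claim?",
    "warrant": "Why would that evidence support the claim?",
    "backing": "What makes that reasoning trustworthy?",
    "qualifier": "How certain is the claim: always, usually, possibly?",
    "rebuttal": "In what circumstances would the claim be false?",
}


def missing_parts(argument):
    """List the parts of the argument that are still empty, in model order."""
    return [f.name for f in fields(argument) if not getattr(argument, f.name)]


def next_prompt(argument):
    """Return the guiding question for the first missing part, or None if complete."""
    missing = missing_parts(argument)
    return PROMPTS[missing[0]] if missing else None


example = ToulminArgument(
    claim="The photo shows people crossing the border last week.",
    grounds="A politician posted the photo on social media.",
)
print(missing_parts(example))
print(next_prompt(example))
```

The same structure could feed a visualisation, with each filled-in part becoming a node in an interactive flow diagram.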

The aim should be to integrate the possibilities of new developments in technological humanism (Digital Future Society 2022; Heikkilä 2023a; Heikkilä 2023b), which shares the aspirations of enhancing media literacy, creating common ground for dialogue, and strengthening social integration by helping to combat disinformation, hate speech and fake news in order to defend the democratic values and stability of societies. It is no exaggeration to state that these are endangered at the current levels of disinformation (McKay & Tenove 2021; Rugge 2020).

References

Beauchamp-Mustafaga, N. & Marcellino, B. 2023. The US Isn’t Ready for the New Age of AI-Fueled Disinformation—But China Is. Time. Cited 27 Nov 2023. Available at https://time.com/6320638/ai-disinformation-china/

Da Empoli, G. 2019. Les ingénieurs du chaos. Paris: JC Lattès.

Diaz Ruiz, C. & Nilsson, T. 2023. Disinformation and Echo Chambers: How Disinformation Circulates on Social Media Through Identity-Driven Controversies. Journal of Public Policy & Marketing. Vol. 42 (1), 18–35. Cited 11 Nov 2023. Available at https://doi.org/10.1177/07439156221103852

Digital Future Society. 2022. Reflections on what would mean for Barcelona to become the capital of technological humanism. Cited 1 Dec 2023. Available at https://digitalfuturesociety.com/app/uploads/2022/03/Reflections_Tech_Humanism_BCN.pdf

Goswami, A. 2022. Is AI the only antidote to disinformation? World Economic Forum: Artificial intelligence. Cited 28 Nov 2023. Available at https://www.weforum.org/agenda/2022/07/disinformation-ai-technology/

Graves, L. 2018. Understanding the Promise and Limits of Automated Fact-Checking. Factsheet 2018. Cited 10 Dec 2023. Available at https://ora.ox.ac.uk/objects/uuid:f321ff43-05f0-4430-b978-f5f517b73b9b/download_file?file_format=application%2Fpdf&safe_filename=graves_factsheet_180226%2BFINAL.pdf&type_of_work=Reportt

Hasen, R.L. 2022. Cheap speech: how disinformation poisons our politics – and how to cure it. New Haven & London: Yale University Press.

Heikkilä, H. 2023a. Towards unifying human design principles for the IOT-era. LAB RDI Journal. Cited 4 Dec 2023. Available at https://www.labopen.fi/en/lab-rdi-journal/towards-unifying-human-design-principles-for-the-iot-era/

Heikkilä, H. 2023b. Muotoiluinstituutin digitaalisen kokemuksen linja yhdistää palvelumuotoilua ja UX/UI-suunnittelua uudesta kulmasta. Cited 11 Dec 2023. Available at https://www.labopen.fi/lab-pro/muotoiluinstituutin-digitaalisen-kokemuksen-linja-yhdistaa-palvelumuotoilua-ja-ux-ui-suunnittelua-uudesta-kulmasta/

Huhtinen, A-M. 2023. Informaation saastuminen on uhka demokratialle. Helsingin Sanomat 3.12.2023. Cited 3 Dec 2023. Available at https://www.hs.fi/mielipide/art-2000010019578.html

Järveläinen, V. 2023. Heittääkö ex-kansanedustaja bensaa liekkeihin feikkikuvalla? Otos ”rajalta” näyttää oudolta. Iltalehti 24.11.2023. Cited 13 Dec 2023. Available at https://www.iltalehti.fi/digiuutiset/a/db3cd41a-fca6-40bf-89c8-249fd2f3dee2

Krug, S. 2006. Don’t make me think!: a common sense approach to web usability. 2nd edition. Berkeley, Calif.: New Riders.

Lippu, A-M. 2023. Kunnallispoliitikko jakoi tekoälykuvia ”pakolaisista” – Näin tunnistat valheellisen kuvan. Helsingin Sanomat 30.11.2023. Cited 13 Dec 2023. Available at https://www.hs.fi/kulttuuri/art-2000010022166.html

McKay, S. & Tenove, C. 2021. Disinformation as a Threat to Deliberative Democracy. Political Research Quarterly. Vol. 74 (3), 703–717. Cited 4 Dec 2023. Available at https://doi.org/10.1177/1065912920938143

McLuhan, M. 1994. Understanding media: the extensions of man. Cambridge, Mass.: MIT Press.

Miller, M.K. (ed.) 2023. The social science of QAnon: a new social and political phenomenon. Cambridge: Cambridge University Press. Cited 2 Dec 2023. Available at https://doi.org/10.1017/9781009052061

Montoro-Montarroso, A., Cantón-Correa, J., Rosso, P., Chulvi, B., Panizo-Lledot, Á., Huertas-Tato, J., Calvo-Figueras, B., Rementeria, M. J. & Gómez-Romero, J. 2023. Fighting disinformation with artificial intelligence: fundamentals, advances and challenges. El profesional de la informacion. Vol. 32 (3). Cited 3 Dec 2023. Available at https://doi.org/10.3145/epi.2023.may.22

Nielsen, J. 2008. How Little Do Users Read? Cited 3 Dec 2023. Available at https://www.nngroup.com/articles/how-little-do-users-read/

@ReijoTossavaine. 2023. (Reijo Tossavainen). X microblogging service. Available at https://twitter.com/ReijoTossavaine/status/1727361474035871751

Robins, E. 2023. Disinformation reimagined: how AI could erode democracy in the 2024 US elections. Guardian. Cited 19 Oct 2023. Available at https://www.theguardian.com/us-news/2023/jul/19/ai-generated-disinformation-us-elections

Rugge, F. 2020. AI in the Age of Cyber-Disorder. IT: Ledizioni. Cited 27 Nov 2023. Available at https://doi.org/10.14672/55263832

Santos, F.C.C. 2023. Artificial Intelligence in Automated Detection of Disinformation: A Thematic Analysis. Journalism and Media. Vol. 4 (2), 679–687. Cited 10 Dec 2023. Available at https://doi.org/10.3390/journalmedia4020043

Turkle, S. 1997. Life on the Screen: identity in the age of the Internet. London: Phoenix.

University of Toronto. Toulmin Model of Argumentation. Cited 3 Dec 2023. Available at http://individual.utoronto.ca/ecolak/EBM/evidence_and_eikos/models_of_argumentation/toulmin/toulmin.htm

Villasenor, J. 2020. How To Deal with AI-Enabled Disinformation? In Rugge, F. (ed.) AI in the Age of Cyber-Disorder. IT: Ledizioni. Cited 27 Nov 2023. Available at https://doi.org/10.14672/55263832

Vosoughi, S., Roy, D. & Aral, S. 2018. The spread of true and false news online. Science. Vol. 359 (6380), 1146–1151. Cited 9 Mar 2018. Available at https://doi.org/10.1126/science.aap9559

Author

Harri Heikkilä, PhD (arts), MSc (sociology), is a principal lecturer (Visual communication, UX/UI) at LAB University of Applied Sciences, Institute of Design and Fine Arts, and is preparing participation in the Creative Europe Programme (CREA).

Illustration: Midjourney, edited by Harri Heikkilä

Published 13.12.2023

Reference to this article

Heikkilä, H. 2023. Disinformation is a human problem, it needs a human solution design. LAB RDI Journal. Cited and the date of citation. Available at https://www.labopen.fi/lab-rdi-journal/disinformation-is-a-human-problem-it-needs-a-human-solution-design/